How to create a robots.txt file to block bots?

Not all bots are bad. There are a few good bots, such as those of Google and Bing, that you will usually want to allow to crawl your site.

Controlling which bots may crawl your site is a simple process: you place a robots.txt file in the site's root directory. For example, a robots.txt file can block all bots except those of Google, Yahoo and MSN (now Bing).
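A minimal sketch of such an allow-list file is below. It is checked with Python's standard-library `urllib.robotparser`, which implements the classic robots.txt rules and may differ in details from how each search engine parses the file. The user-agent tokens `Googlebot`, `Bingbot` and `Slurp` (Yahoo's crawler) are assumptions; confirm them in each search engine's documentation before relying on them.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical allow-list: Google, Bing (formerly MSN) and Yahoo (Slurp) may
# crawl everything (empty Disallow); every other compliant bot is blocked.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow:

User-agent: Bingbot
Disallow:

User-agent: Slurp
Disallow:

User-agent: *
Disallow: /
"""


def build_parser(text: str) -> RobotFileParser:
    """Parse robots.txt text locally, without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


if __name__ == "__main__":
    rp = build_parser(ROBOTS_TXT)
    for agent in ("Googlebot", "Bingbot", "Slurp", "AhrefsBot"):
        print(agent, rp.can_fetch(agent, "https://example.com/page.html"))
```

Records are matched in order. A named record wins over the catch-all `*` record, which is why the three allowed crawlers escape the final `Disallow: /`.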

The robots.txt file controls how web crawlers crawl your pages. A badly configured robots.txt file can stop search engines from crawling, and therefore indexing, your site's pages. The same file can also be used deliberately to keep search engine crawlers out of the site.

Aggressive bots hit your site repeatedly and use up a lot of bandwidth, which slows your site down, so it is important to block them. A flood of bot traffic can also cause problems such as heavy server load and an unstable server. Installing ModSecurity modules or plugins can help prevent these issues. Keep in mind that robots.txt is only a request: well-behaved crawlers respect it, but bad bots can ignore it. Those bots have to be blocked on the server itself, as shown later in this post.

Fixing a Robots.txt File That Blocks All Site Crawlers

The robots.txt file is placed in the root directory of the site, which is the only place crawlers look for it. You can edit it with your preferred text editor. In this post, we explain the robots.txt file and how to find and edit it.
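Under the Robots Exclusion Protocol, a crawler requests `/robots.txt` from the root of each host, so a copy in a subdirectory is ignored. A small sketch of that lookup follows; `robots_txt_url` is a hypothetical helper, and example.com is a placeholder domain.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the robots.txt URL that governs page_url.

    Only the scheme and host (including any port) are kept. The page's path,
    query string and fragment are replaced by the root path /robots.txt.
    """
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_txt_url("https://www.example.com/blog/post.html?ref=1"))
# https://www.example.com/robots.txt
```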

An asterisk (*) in the User-agent line means the rules that follow apply to all crawlers. With the Disallow directive you can stop a search bot from crawling a given page or directory. A "/" after Disallow means that no page on the site may be crawled by search engines.

If your robots.txt file contains "User-agent: *" and "Disallow: /" by mistake, remove the "/" from the Disallow line and leave its value empty, keeping the "*" in User-agent. An empty Disallow lets crawlers scan your whole site again, so it can be indexed by Google and other search engines. The steps for editing robots.txt in cPanel are listed further below.
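The difference a single "/" makes can be checked with Python's standard-library `urllib.robotparser`. The sketch below compares a file that blocks everything with one that allows everything. `can_crawl` is a hypothetical helper name and the URL is a placeholder.

```python
from urllib.robotparser import RobotFileParser


def can_crawl(robots_txt: str, agent: str, url: str) -> bool:
    """Ask Python's standard robots.txt parser whether agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


BLOCK_ALL = "User-agent: *\nDisallow: /\n"  # "/" after Disallow: nothing may be crawled
ALLOW_ALL = "User-agent: *\nDisallow:\n"    # empty Disallow: everything may be crawled

url = "https://example.com/products/item.html"
print(can_crawl(BLOCK_ALL, "Googlebot", url))  # False
print(can_crawl(ALLOW_ALL, "Googlebot", url))  # True
```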

To block a single bad User-Agent, you can use the first .htaccess example given below. It relies on Apache's mod_rewrite module.

To block several User-Agent strings at once, use the second .htaccess example given below.

If you manage the whole server, you can also block particular bots globally, for every site on it. To do this in WHM:

- Log in to WHM.

- Go to "Apache Configuration" and select "Include Editor".

- Under "Pre Main Include", select your Apache version (or All Versions) and paste the code given below.

- Click the Update button, then restart Apache.

We hope this post helps you reduce server load and improve the performance and speed of your website.


Code (a robots.txt that blocks all crawlers):

```
User-agent: *
Disallow: /
```

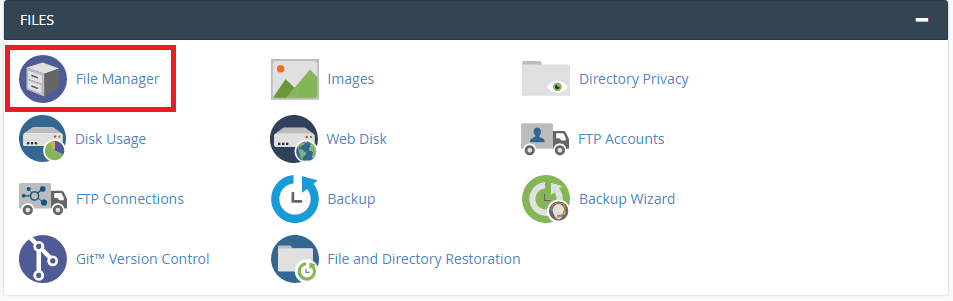

- First, log in to cPanel with your username and password.

- Open "File Manager" and go to the root directory of your website.

- Create or open the robots.txt file there; it must be in the same directory as your site's index file. Add the code below and save the file. This example blocks all compliant crawlers from the entire site.

Code:

```
User-agent: *
Disallow: /
```
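If you prefer to script this step instead of using File Manager, the minimal Python sketch below writes the same rules into the document root and reads them back. The function names are hypothetical, and the script must run with permission to write to the document root.

```python
from pathlib import Path
from urllib.robotparser import RobotFileParser

# The rules from the example above: block every compliant crawler.
RULES = "User-agent: *\nDisallow: /\n"


def install_robots_txt(docroot: Path, rules: str = RULES) -> Path:
    """Write robots.txt (plain UTF-8 text) into the site's document root."""
    target = docroot / "robots.txt"
    target.write_text(rules, encoding="utf-8")
    return target


def blocks_everything(robots_file: Path) -> bool:
    """Re-read the saved file and check that a generic bot is refused."""
    parser = RobotFileParser()
    parser.parse(robots_file.read_text(encoding="utf-8").splitlines())
    return not parser.can_fetch("ExampleBot", "/")
```

For example, call `install_robots_txt(Path("/home/username/public_html"))`, where that path is a hypothetical cPanel document root; substitute your own.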

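Code (.htaccess, block a single User-Agent):

```apache
# Requires mod_rewrite. Return 403 Forbidden when the User-Agent header
# contains "Baiduspider" ([NC] = case-insensitive match).
RewriteEngine On
RewriteCond %{HTTP_USER_AGENT} Baiduspider [NC]
RewriteRule .* - [F,L]
```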
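To see what this rule matches, here is a small Python emulation (not Apache itself) of the RewriteCond/RewriteRule pair. The condition's pattern is an unanchored regular expression, `[NC]` makes it case-insensitive, and `[F]` answers 403 Forbidden. `response_status` is a hypothetical helper, and the User-Agent strings are samples.

```python
import re

# Emulates:
#   RewriteCond %{HTTP_USER_AGENT} Baiduspider [NC]
#   RewriteRule .* - [F,L]
# The pattern is unanchored, so it can match anywhere in the header.
BAD_UA = re.compile(r"Baiduspider", re.IGNORECASE)


def response_status(user_agent: str) -> int:
    """403 if the rule would fire ([F] = Forbidden), else 200.

    Assumes no other rule or module changes the response.
    """
    return 403 if BAD_UA.search(user_agent) else 200


print(response_status("Mozilla/5.0 (compatible; Baiduspider/2.0)"))  # 403
print(response_status("Mozilla/5.0 (Windows NT 10.0; Win64; x64)"))  # 200
```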

Code (.htaccess, block several User-Agents at once):

```apache
# Return 403 Forbidden to any request whose User-Agent contains
# Baiduspider, HTTrack or Yandex, ignoring case.
RewriteEngine On
RewriteCond %{HTTP_USER_AGENT} ^.*(Baiduspider|HTTrack|Yandex).*$ [NC]
RewriteRule .* - [F,L]
```
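The alternation `(Baiduspider|HTTrack|Yandex)` matches if any one of the names appears. Because the condition is a search, not a full-string match, the surrounding `^.*` and `.*$` should add nothing for single-line headers. The Python sketch below (an emulation, not Apache) checks this with sample User-Agent strings. `is_blocked` and the sample list are hypothetical.

```python
import re

# The multi-bot condition from the .htaccess example, and a shorter equivalent.
ORIGINAL = re.compile(r"^.*(Baiduspider|HTTrack|Yandex).*$", re.IGNORECASE)
SHORTER = re.compile(r"(Baiduspider|HTTrack|Yandex)", re.IGNORECASE)

SAMPLE_AGENTS = [
    "Mozilla/5.0 (compatible; YandexBot/3.0)",
    "Mozilla/4.5 (compatible; HTTrack 3.0x; Windows 98)",
    "Mozilla/5.0 (compatible; Googlebot/2.1)",
]


def is_blocked(pattern: re.Pattern, user_agent: str) -> bool:
    """True if the RewriteCond would match, i.e. the request gets a 403."""
    return pattern.search(user_agent) is not None


for ua in SAMPLE_AGENTS:
    print(is_blocked(ORIGINAL, ua), is_blocked(SHORTER, ua), ua)
```

If the two patterns agree in your own tests, `RewriteCond %{HTTP_USER_AGENT} (Baiduspider|HTTrack|Yandex) [NC]` is a slightly simpler way to write the same condition.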

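Code (WHM Pre Main Include, blocks the listed bots for every site under /home):

```apache
# Apache 2.4 syntax. First tag requests from unwanted bots with the bad_bots
# environment variable (case-insensitive match on the User-Agent header).
<Directory "/home">
    SetEnvIfNoCase User-Agent "MJ12bot" bad_bots
    SetEnvIfNoCase User-Agent "AhrefsBot" bad_bots
    SetEnvIfNoCase User-Agent "SemrushBot" bad_bots
    SetEnvIfNoCase User-Agent "Baiduspider" bad_bots
    # Then allow everyone except requests tagged bad_bots (403 Forbidden).
    <RequireAll>
        Require all granted
        Require not env bad_bots
    </RequireAll>
</Directory>
```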
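To see the logic of this block, here is a small Python emulation (not Apache itself). Each SetEnvIfNoCase line does a case-insensitive regular-expression search of the User-Agent header and sets bad_bots on a match. `<RequireAll>` only grants access when every Require line inside it passes. A negated `Require not` cannot grant access on its own, which is why it is paired with `Require all granted`. The function names and sample User-Agent strings are hypothetical.

```python
import re

# SetEnvIfNoCase User-Agent "<pattern>" bad_bots: case-insensitive regex search.
BAD_BOT_PATTERNS = ["MJ12bot", "AhrefsBot", "SemrushBot", "Baiduspider"]


def sets_bad_bots(user_agent: str) -> bool:
    """True if any SetEnvIfNoCase line would set the bad_bots variable."""
    return any(re.search(p, user_agent, re.IGNORECASE) for p in BAD_BOT_PATTERNS)


def authorize(user_agent: str) -> int:
    """<RequireAll>: every Require line must pass, otherwise 403 Forbidden."""
    all_granted = True                           # Require all granted
    not_flagged = not sets_bad_bots(user_agent)  # Require not env bad_bots
    return 200 if all_granted and not_flagged else 403


for ua in ("Mozilla/5.0 (compatible; AhrefsBot/7.0)",
           "Mozilla/5.0 (compatible; Googlebot/2.1)"):
    print(authorize(ua), ua)
```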